altair-viz / vega_datasets

A Python package for online & offline access to vega datasets

License: MIT License

Hello,

vega_datasets is my go-to source for quick data to try things out. A couple of datasets I use often, including but not limited to birdstrikes and climate, are returning HTTP Error 404: Not Found. Any suggestions?

Nice work on vega_datasets and altair! 😃

It would be great for the entire world airports dataset to be included in vega_datasets, not just a subset for those in the USA. It would make for many more interesting visualization possibilities, starting with this:

alt.Chart(world_airports[:5000]).mark_bar().encode(
    x='count()',
    y='Country:N'
)

and then filtering by country, timezone etc.

This dataset is around 8000 rows, which would also serve the useful purpose of demonstrating how to handle datasets longer than Altair's default limit of 5000 rows. This limit is likely the first hurdle most people using Altair for real datasets will have to surmount. (I'd also gladly volunteer to help make handling of large datasets more seamless in Altair...)
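Recent Altair versions can lift the limit with `alt.data_transformers.disable_max_rows()`, but for a count chart like the one above another option is to aggregate before plotting, so the chart receives one row per country instead of one row per airport. A minimal standard-library sketch, assuming each airport is a dict with a `Country` key; `count_by` is a hypothetical helper and the records are toy placeholders, not real airport data:

```python
from collections import Counter


def count_by(records, key):
    """Collapse row-level records into one {key, "count"} row per distinct value.

    A bar chart of counts only needs these aggregated rows, so the number of
    rows handed to the plotting library no longer grows with the dataset.
    """
    counts = Counter(record[key] for record in records)
    # Sort by descending count, then by value, for a stable order.
    return [
        {key: value, "count": n}
        for value, n in sorted(counts.items(), key=lambda item: (-item[1], item[0]))
    ]


# Toy placeholder records standing in for the ~8000-row airports table.
airports = [
    {"Country": "A"},
    {"Country": "B"},
    {"Country": "A"},
    {"Country": "C"},
    {"Country": "A"},
]

print(count_by(airports, "Country"))
# [{'Country': 'A', 'count': 3}, {'Country': 'B', 'count': 1}, {'Country': 'C', 'count': 1}]
```

The aggregated rows would then be encoded with `x='count:Q'` (a plain field) rather than the `x='count()'` aggregate.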

Example URLs:

We can add local datasets if

Adding a dataset to the package is easy:

python tools/download_datasets.py

Things are broken after moving the repo to altair-viz.

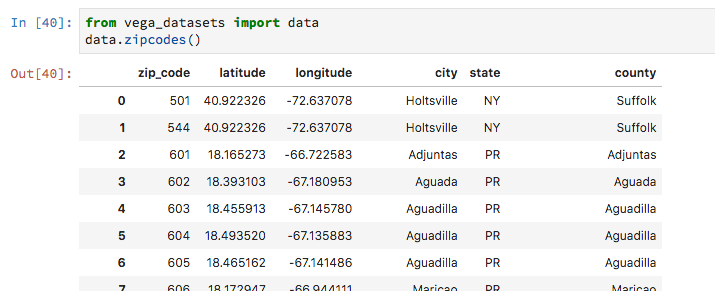

from vega_datasets import data
zipcodes = data.zipcodes()
print(zipcodes.zip_code.dtype)

Expected: dtype('O') or rather CategoricalDtype(categories=['00501', '00544', ....

Actual: dtype('int64')

Some ZIP codes start with "0", and zipcodes = data.zipcodes() strips the leading zeros. The following works, but I think it would be better to return the correct dtypes by default.

zipcodes = data.zipcodes(dtype={'zip_code': 'category'})

I also found that data.unemployment() cannot parse the data correctly. One has to specify the separator: data.unemployment(sep='\t').
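Both problems map onto `pandas.read_csv` arguments: `dtype` keeps the ZIP codes as text so the leading zeros survive, and `sep="\t"` tells the parser the file is tab-separated. A self-contained sketch using small inline strings (toy rows, not the real zipcodes or unemployment files):

```python
import io

import pandas as pd

# Toy CSV rows; only the leading zero in the first ZIP code matters here.
zip_csv = "zip_code,label\n00501,a\n10001,b\n"

# Default parsing infers int64 and drops the leading zero.
as_int = pd.read_csv(io.StringIO(zip_csv))

# Forcing the column to str keeps the five-character codes intact.
as_str = pd.read_csv(io.StringIO(zip_csv), dtype={"zip_code": str})

# Toy tab-separated rows: with the default comma separator,
# each line is read as a single column.
tsv = "id\trate\n1\t0.5\n2\t0.7\n"
wrong = pd.read_csv(io.StringIO(tsv))
right = pd.read_csv(io.StringIO(tsv), sep="\t")

print(as_int["zip_code"].tolist())  # [501, 10001]
print(as_str["zip_code"].tolist())  # ['00501', '10001']
print(wrong.shape, right.shape)     # (2, 1) (2, 2)
```

Since the report above passes `dtype=` straight to `data.zipcodes()`, the loader appears to forward keyword arguments to the parser, so `data.zipcodes(dtype={'zip_code': str})` would presumably be the plain-string counterpart of the category workaround.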

The vega/vega-datasets repository recently released a major update. A few changes will need to be made to catch up. I just wanted to file this issue to get the ball rolling.

I can work on it and submit a PR when I get a bit of free time :)

In vega/vega-datasets they recommend accessing datasets through a CDN with a pinned version, using URLs such as

https://cdn.jsdelivr.net/npm/vega-datasets@<version>/data/cars.json

instead of

https://vega.github.io/vega-datasets/data/cars.json

I was wondering if we should make this change as well?

I think modifications would take place here:

vega_datasets/vega_datasets/core.py

Line 94 in 70d6829

but it would be cool if it could grab the correct version number from

Anyways, just an idea going forward to try to make things more stable.
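jsDelivr serves npm packages at `/npm/<package>@<version>/<path>`, so a pinned URL only needs the package version plugged in. A minimal sketch of that idea; `dataset_url` is a hypothetical helper, not part of vega_datasets, and `"1.0.0"` is a placeholder version used only for illustration:

```python
# Hypothetical helper (not part of vega_datasets): build a dataset URL pinned to a
# package version on jsDelivr, or fall back to the unpinned GitHub Pages URL.
CDN_TEMPLATE = "https://cdn.jsdelivr.net/npm/vega-datasets@{version}/data/{filename}"
PAGES_TEMPLATE = "https://vega.github.io/vega-datasets/data/{filename}"


def dataset_url(filename, version=None):
    """Return the URL for `filename`, pinned to `version` when one is given."""
    if version is None:
        return PAGES_TEMPLATE.format(filename=filename)
    return CDN_TEMPLATE.format(version=version, filename=filename)


# "1.0.0" is a placeholder, not a recommended version.
print(dataset_url("cars.json", version="1.0.0"))
# https://cdn.jsdelivr.net/npm/vega-datasets@1.0.0/data/cars.json
print(dataset_url("cars.json"))
# https://vega.github.io/vega-datasets/data/cars.json
```

Keeping the version in one place means that bumping it, or reading it from package metadata, would be a single change rather than an edit to every URL.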

I am getting the following test failures with pandas 0.25.0 that didn't occur with earlier versions of pandas:

=================================== FAILURES ===================================
____________________________ test_iris_column_names ____________________________

    def test_iris_column_names():
        iris = data.iris()
        assert type(iris) is pd.DataFrame
>       assert tuple(iris.columns) == ('petalLength', 'petalWidth', 'sepalLength',
                                       'sepalWidth', 'species')
E       AssertionError: assert ('sepalLength...h', 'species') == ('petalLength'...h', 'species')
E         At index 0 diff: 'sepalLength' != 'petalLength'
E         Use -v to get the full diff

vega_datasets/tests/test_local_datasets.py:32: AssertionError

____________________________ test_cars_column_names ____________________________

    def test_cars_column_names():
        cars = data.cars()
        assert type(cars) is pd.DataFrame
>       assert tuple(cars.columns) == ('Acceleration', 'Cylinders', 'Displacement',
                                       'Horsepower', 'Miles_per_Gallon', 'Name',
                                       'Origin', 'Weight_in_lbs', 'Year')
E       AssertionError: assert ('Name', 'Mil..._in_lbs', ...) == ('Acceleration..., 'Name', ...)
E         At index 0 diff: 'Name' != 'Acceleration'
E         Use -v to get the full diff

vega_datasets/tests/test_local_datasets.py:51: AssertionError
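Both diffs point to a change in column order rather than in column names: the tests expect alphabetical order, while the loaded frames now start with `sepalLength` and `Name`. This looks consistent with pandas 0.25 keeping the key order of the source records when building a DataFrame from a list of dicts, though that attribution is an assumption here. If order is not what these tests are meant to check, an order-insensitive comparison would make them robust to it. A minimal sketch; `assert_same_columns` is a hypothetical helper:

```python
def assert_same_columns(actual, expected):
    """Assert that two column collections hold the same names, ignoring order.

    Hypothetical test helper. Sorted lists (not sets) are compared, so a
    duplicated column would still be reported as a mismatch.
    """
    missing = sorted(set(expected) - set(actual))
    unexpected = sorted(set(actual) - set(expected))
    assert sorted(actual) == sorted(expected), (
        f"missing={missing}, unexpected={unexpected}"
    )


# Toy column names: same members, different order, so the check passes.
assert_same_columns(("b", "a", "c"), ("a", "b", "c"))
```

In `test_iris_column_names`, `iris.columns` would be passed as `actual` and the existing tuple as `expected`; `sorted()` accepts a pandas `Index` directly.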

When the zipcodes data is loaded, it returns a dataframe with integer values for the zip_code column.

These values should be five-character strings. Is there a reasonable way to make this package control for that?

In the meantime, this is a simple workaround:

df['zip_code'] = df['zip_code'].apply(lambda x: str(x).zfill(5))
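The same workaround can be written with pandas' vectorized string methods, which avoids calling a Python lambda once per row. A sketch on a toy frame standing in for the loaded zipcodes data:

```python
import pandas as pd

# Toy frame standing in for the zipcodes data, with ZIP codes parsed as int64.
df = pd.DataFrame({"zip_code": [501, 544, 10001]})

# Vectorized equivalent of the apply/lambda workaround:
# convert to string, then left-pad with zeros to five characters.
df["zip_code"] = df["zip_code"].astype(str).str.zfill(5)

print(df["zip_code"].tolist())  # ['00501', '00544', '10001']
```

This assumes the column was parsed as integers; if missing values had turned it into floats, `astype(str)` would yield strings like `'501.0'`, and padding those would not give valid ZIP codes.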

Recently there was a new dataset added to vega/vega-datasets

sp500-2000.csv - S&P 500 index values from 2000 to 2020, retrieved from Yahoo Finance.

Making a note here to add this dataset once #39 is done.

Some datasets, like ffox, are images (.png) and thus throw a ValueError.

To reproduce in vega-datasets-0.9.0 (current version on pip):

from vega_datasets import data
data.ffox()

Raises:

---------------------------------------------------------------------------
ValueError                                Traceback (most recent call last)
c:\Users\redacted\Repositories\altair-demos\basic_chart.py in <module>
----> 1 data.ffox()

~\AppData\Local\Programs\Python\Python39\lib\site-packages\vega_datasets\core.py in __call__(self, use_local, **kwargs)
    244             return pd.read_csv(datasource, **kwds)
    245         else:
--> 246             raise ValueError(
    247                 "Unrecognized file format: {0}. "
    248                 "Valid options are ['json', 'csv', 'tsv']."

ValueError: Unrecognized file format: png. Valid options are ['json', 'csv', 'tsv'].

The data in the altair repository is inconsistent with the vega data:

https://github.com/altair-viz/vega_datasets/blob/master/vega_datasets/_data/seattle-weather.csv

https://github.com/vega/vega/blob/main/docs/data/seattle-weather.csv

The vega data makes more sense, since it seems that the altair version of the dataset barely has any rain for 2015. Check 2015-01-02 for an example of an inconsistent field, but there are MANY.
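One way to list every inconsistent field is to match rows of the two CSV files on their date column and compare the remaining fields. A minimal standard-library sketch; `diff_by_key` is a hypothetical helper, and the inline rows are toy values, not taken from either seattle-weather.csv:

```python
import csv
import io


def diff_by_key(old_text, new_text, key):
    """Yield (key, column, old_value, new_value) for every differing field.

    Hypothetical helper: rows are matched on `key`, only keys present in both
    files are compared, and values are compared as raw strings.
    """
    old_rows = {row[key]: row for row in csv.DictReader(io.StringIO(old_text))}
    new_rows = {row[key]: row for row in csv.DictReader(io.StringIO(new_text))}
    for k in sorted(old_rows.keys() & new_rows.keys()):
        for column, old_value in old_rows[k].items():
            new_value = new_rows[k].get(column)
            if column != key and old_value != new_value:
                yield k, column, old_value, new_value


# Toy rows only; real use would read the two seattle-weather.csv files.
old = "date,precipitation\nday1,0.0\nday2,1.5\n"
new = "date,precipitation\nday1,0.0\nday2,2.5\n"
print(list(diff_by_key(old, new, "date")))
# [('day2', 'precipitation', '1.5', '2.5')]
```

Because values are compared as text, `1.0` and `1` would be reported as different; parsing numeric columns first would avoid that if the two files format numbers differently.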

I got some errors when trying to read the 'miserables', 'us-10m', and 'world-110m' datasets.

For 'miserables' it read:

ValueError: arrays must all be same length

and for 'us-10m' and 'world-110m' it read:

ValueError: Mixing dicts with non-Series may lead to ambiguous ordering.
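These files are not flat tables: miserables.json describes a graph as separate `nodes` and `links` lists, and us-10m and world-110m are TopoJSON documents. Trying to turn either into a single DataFrame would plausibly produce errors like the ones above. A sketch that parses the JSON with the standard library and keeps each list separate, assuming the `nodes`/`links` layout with integer `source`/`target` indices into `nodes`; the content is a toy graph, not the real file:

```python
import json

# Toy graph in the assumed miserables.json layout: two lists of different lengths,
# which is why they cannot be columns of one table.
raw = """
{
  "nodes": [{"name": "n0", "group": 1}, {"name": "n1", "group": 1}, {"name": "n2", "group": 2}],
  "links": [{"source": 1, "target": 0, "value": 1}]
}
"""

graph = json.loads(raw)

# Keep each list as its own table instead of forcing them into one frame.
nodes = graph["nodes"]
links = graph["links"]

# Resolve the (assumed) integer node indices in each link to node names.
edges = [
    (nodes[link["source"]]["name"], nodes[link["target"]]["name"], link["value"])
    for link in links
]

print(len(nodes), len(links))  # 3 1
print(edges)                   # [('n1', 'n0', 1)]
```

With pandas, `pd.DataFrame(graph["nodes"])` and `pd.DataFrame(graph["links"])` would each build a valid frame. TopoJSON files are typically handed to a mapping layer as-is (in Altair, via `alt.topo_feature`) rather than converted to a DataFrame.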

Please forgive me as I am a Python "newbie" and may be asking an ignorant question.

I would like to be able to use the form:

import vega_datasets.data as data

instead of

from vega_datasets import data

My motivation is that I can use something analogous to the first form when using the import() function in the R reticulate package.

If I try this (in Python):

from vega_datasets import data
dir(data)

I get (as I expect):

['7zip', 'airports', 'anscombe', 'barley', ..., 'zipcodes']

However, if I try this:

import vega_datasets.data as data2
dir(data2)

I get:

['__doc__', '__loader__', '__name__', '__package__', '__path__', '__spec__']

whereas I am hoping to replicate the first behavior.

By contrast, this works as I expect:

import scipy.stats as stats
dir(stats)

Question: could it be possible for import vega_datasets.data as data to work like from vega_datasets import data?

Thanks!
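The two statements resolve different things. `from vega_datasets import data` reads the `data` attribute set in the package's `__init__.py`, while `import vega_datasets.data as data2` imports a *submodule* named `vega_datasets.data` and binds that. The `dir(data2)` output above, which lists only module metadata such as `__path__`, suggests such a submodule exists as a package directory; that is an inference, not confirmed here. The sketch below rebuilds the situation with a throwaway package named `demo_pkg` (a hypothetical name), so it runs without vega_datasets installed:

```python
import importlib
import pathlib
import sys
import tempfile

# Build a throwaway package: __init__.py defines an attribute called `data`,
# and demo_pkg/data/ is a directory without __init__.py (a namespace subpackage).
root = pathlib.Path(tempfile.mkdtemp())
(root / "demo_pkg" / "data").mkdir(parents=True)
(root / "demo_pkg" / "__init__.py").write_text("data = 'loader object'\n")
sys.path.insert(0, str(root))
importlib.invalidate_caches()

# 1) `from ... import data` finds the attribute, so no submodule is imported.
from demo_pkg import data as via_from
print(type(via_from).__name__)  # str (stands in for the data loader object)

# 2) `import demo_pkg.data` imports the subpackage and binds it instead.
import demo_pkg.data as via_import
print(type(via_import).__name__)  # module

# 3) Side effect: importing the submodule replaced the parent's `data` attribute.
import demo_pkg
print(demo_pkg.data is via_import)  # True
```

Step 3 means the order of imports in a session matters. In a fresh session, importing the package and then reading its attribute (`import vega_datasets` followed by `data = vega_datasets.data`) gives the same object as `from vega_datasets import data`; in reticulate that would presumably be `import("vega_datasets")$data`.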

It appears that there are two datasets called weather in the vega/vega-datasets repo:

Currently the altair-viz/vega_datasets only includes weather.json.

To add weather.csv do I just add an entry to vega_datasets/datasets.json?

Also any thoughts on what to name the two?