The Image Caption Generator is a powerful tool that combines computer vision and natural language processing to generate descriptive captions for images. This project leverages state-of-the-art deep learning techniques to automatically generate human-like captions that accurately depict the content of input images.

The ability to generate meaningful captions for images has numerous applications, including aiding visually impaired individuals, enhancing image search engines, and providing more context for images in various domains. This project aims to address this task by training a deep neural network to recognize the content of an image and generate a coherent caption that describes it.

-

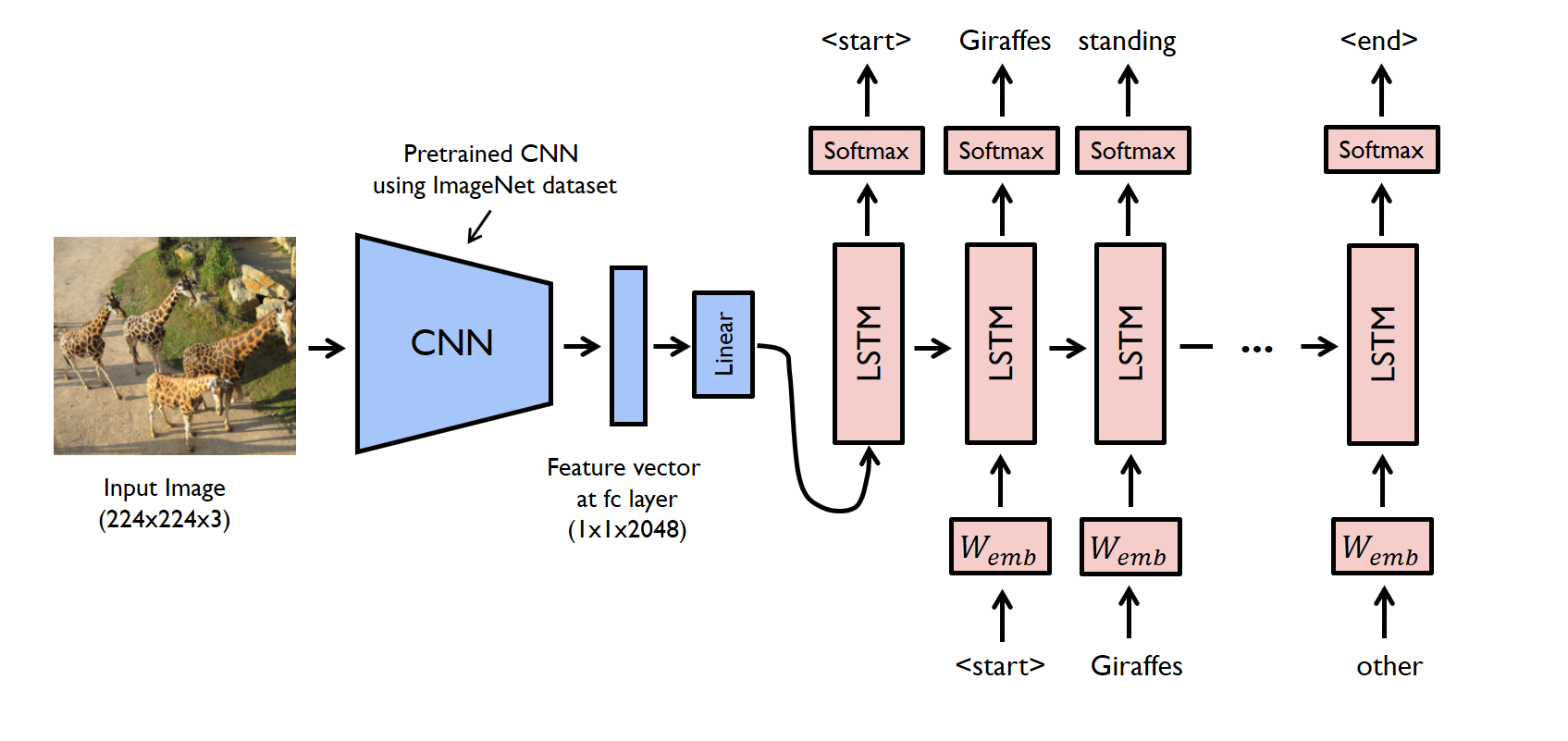

State-of-the-Art Model: The image caption generator utilizes a cutting-edge deep learning model that combines convolutional neural networks (CNNs) for image feature extraction and recurrent neural networks (RNNs) for language generation.

-

Easy-to-Use: The provided code and documentation make it simple to integrate the image caption generation capability into your own projects.

-

Customization: The model can be fine-tuned on your specific dataset to generate captions that are more aligned with your domain.

-

Clone this repository:

git clone https://github.com/your-username/Image_Caption_Generator.git

-

Navigate to the project directory:

cd Image_Caption_Generator -

Install the required dependencies:

pip install -r requirements.txt

-

Download the pre-trained model weights and place them in the appropriate directory.

-

Run the

generate_caption.pyscript and provide the path to the image you want to generate a caption for:python generate_caption.py --image_path path/to/your/image.jpg

-

The script will process the image and output a generated caption based on the content of the image.

The image caption generator employs a two-part architecture: a CNN-based image encoder and an RNN-based caption decoder. The image encoder extracts high-level features from the input image, while the caption decoder generates a sequence of words based on the extracted features.

For more details on the architecture and training process, refer to ModelArchitecture.md.

Here are some examples of captions generated by the model:

-

Input Image: A cat sleeping on a couch. Generated Caption: A fluffy cat lounging on the couch.

-

Input Image: A cityscape with tall skyscrapers. Generated Caption: Tall skyscrapers standing in the city skyline.

Contributions are welcome! If you find any issues or have suggestions for improvements, feel free to open a pull request.

This project is licensed under the MIT License.

Disclaimer: This project is developed for educational and research purposes.