I think this is a nice attempt at comparing HTTP clients across different languages, but it suffers from unfairness in how the clients are invoked.

The problem is that hyperfine runs each binary from scratch on every iteration, which puts Zig in a better light because the other languages carry additional startup overhead. So most of the timings don't really measure the HTTP library, but rather everything around it:

- Python: It starts the interpreter every time, so the interpreter startup and the time to parse and run the script are measured as well, not just the HTTP library call.

- Rust:

  - In general, most of these crates come with extensive features, including certificate loading, internal caching and what not. I don't know how much they do up front versus on demand, but I'd expect quite some overhead in creating the client instances. Overall, they are likely to perform better on subsequent HTTP calls.

  - The async clients in particular have the additional overhead of spinning up the Tokio runtime. You use the current-thread version, which is more lightweight, but it definitely has some setup overhead as well.

- Go: Similar to Rust, there is definitely some overhead from the runtime running in the background, the GC, and the internal mechanics of the client.

- curl: Probably the same as with the Rust versions: it loads several things on startup that might not be needed for such a lightweight HTTP/1 call without certificates.

Improving the setup

To mitigate some of the pitfalls in the other languages and make the comparison fairer, two attempts immediately come to mind:

- Let each program print out its own timing.

- Run the HTTP request in a loop.

The first attempt actually came to mind after I implemented the second 😅.

I guess for most programs except curl it's pretty trivial: take a timestamp before the request is sent, diff it with the current timestamp after the response body has been printed, then log the duration.
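A minimal sketch of that in-program timing in Rust, using only `std::time::Instant` so it runs without any crates. The `time_once` helper and the placeholder closure are hypothetical; in the real benchmark the closure would wrap the actual client call (ureq, reqwest, etc.) plus printing of the response body.

```rust
use std::time::{Duration, Instant};

/// Runs `request` once and returns its result together with the elapsed
/// wall-clock time. Only the work inside the closure is measured, so process
/// startup, runtime initialization and teardown are excluded.
fn time_once<T>(request: impl FnOnce() -> T) -> (T, Duration) {
    let start = Instant::now(); // timestamp before the request is sent
    let result = request();
    (result, start.elapsed()) // diff after the body has been handled
}

fn main() {
    // Hypothetical stand-in for the real client call: it just "prints the
    // body" so the example runs without a network connection.
    let (body, elapsed) = time_once(|| {
        let body = String::from("Hello from the test server");
        println!("{body}");
        body
    });
    // Log to stderr so the timing doesn't mix with the printed body.
    eprintln!("request took {elapsed:?} ({} bytes)", body.len());
}
```

Logging to stderr keeps stdout identical to the original programs, so the output can still be compared across languages.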

But this would still not be fair, as it wouldn't account for optimizations that only pay off on subsequent calls, such as internal buffers that can be reused instead of being allocated again on the next request.
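To illustrate why a single-shot measurement can miss this, here is a toy Rust model (not taken from any of the clients) that reads several response bodies into one reusable `Vec<u8>`. `clear()` drops the contents but keeps the allocation, so only the first request pays for growing the buffer; a single timed request only ever sees that first, most expensive case. The function name and the fixed-size bodies are assumptions for illustration.

```rust
/// Toy model of a client that keeps one response buffer alive across requests.
/// Returns how many of the requests had to grow (reallocate) the buffer.
fn count_buffer_growths(bodies: &[&[u8]]) -> usize {
    let mut buf: Vec<u8> = Vec::new();
    let mut growths = 0;
    for body in bodies {
        buf.clear(); // drop the previous body, keep the allocation
        let capacity_before = buf.capacity();
        buf.extend_from_slice(body); // "receive" the next body
        if buf.capacity() != capacity_before {
            growths += 1; // this request paid for an allocation
        }
    }
    growths
}

fn main() {
    // 100 hypothetical responses of identical size (1 KiB each).
    let body = [b'x'; 1024];
    let bodies = vec![&body[..]; 100];
    // Only the first request grows the buffer; the other 99 reuse it.
    println!("growths: {}", count_buffer_growths(&bodies));
}
```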

So I went ahead with the second attempt (as mentioned, this was actually my first idea; only while writing this did I realize each program could log its own timing).

It's a pretty easy change actually: let each program send the request several times in a loop, so the overhead of spinning things up is spread across many requests. It won't drop to zero, but with enough iterations the overhead gets watered down enough to give more comparable results that reflect the actual HTTP library performance.
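A rough Rust sketch of both sides of this idea: `mean_per_call` is the in-process loop, and `amortized_per_request` is a simple cost model for timing the whole process externally, where a one-off startup cost S is shared by N requests of cost r each, giving S/N + r per request. Both helpers are hypothetical, and the 50 ms / 1 ms figures below are made-up illustration values, not measurements.

```rust
use std::time::{Duration, Instant};

/// Runs `request` `iterations` times inside one process and returns the mean
/// wall-clock time per call. Costs paid before this function runs (runtime
/// init, client construction) are not included in the result.
fn mean_per_call(iterations: u32, mut request: impl FnMut()) -> Duration {
    assert!(iterations > 0, "need at least one iteration");
    let start = Instant::now();
    for _ in 0..iterations {
        request();
    }
    start.elapsed() / iterations
}

/// Cost model for timing the whole process externally (as hyperfine does):
/// a one-off `startup` cost plus `n` requests of `per_request` each.
/// Returns the share attributed to each request: startup / n + per_request.
fn amortized_per_request(startup: Duration, per_request: Duration, n: u32) -> Duration {
    startup / n + per_request
}

fn main() {
    // Hypothetical numbers for illustration only.
    let startup = Duration::from_millis(50);
    let per_request = Duration::from_millis(1);
    for n in [1, 10, 100] {
        // The startup share shrinks from 50 ms (n = 1) to 0.5 ms (n = 100).
        println!("n = {n:>3}: {:?} per request", amortized_per_request(startup, per_request, n));
    }

    // The loop itself, with a placeholder instead of a real HTTP call.
    let mut calls = 0u32;
    let mean = mean_per_call(100, || calls += 1);
    println!("{calls} calls, mean {mean:?}");
}
```

The model also shows why the overhead never fully disappears: S/N only approaches zero as N grows.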

My modifications

Overall, I made three modifications, two of them Rust-specific.

- I added the

attohttpc crate into the pool, as it's a somewhat popular alternative to ureq. Have used it in the past several times, and it very much resembles the reqwest API, so I was curious how it performs.

- Many Rust programs use too many features, most of them unused. Probably most of them would be optimized away anyway, but it can't hurt to reduce the feature set. At least build times go down a bit.

2.1. Apply default-features = false to most dependencies, and reduce tokio and hyper features from full to the absolute minimum.

2.2. Do the async runtime setup manually, which is probably not having any performance impact, but again can reduce the dependency count for this simple setup.

- The most important change of adjusting each client to do repeated HTTP requests in a loop.

3.1. Set all loops to do 100 requests. I'd have loved to set it higher, but the Zig HTTP client was surprisingly slow when run in a loop.

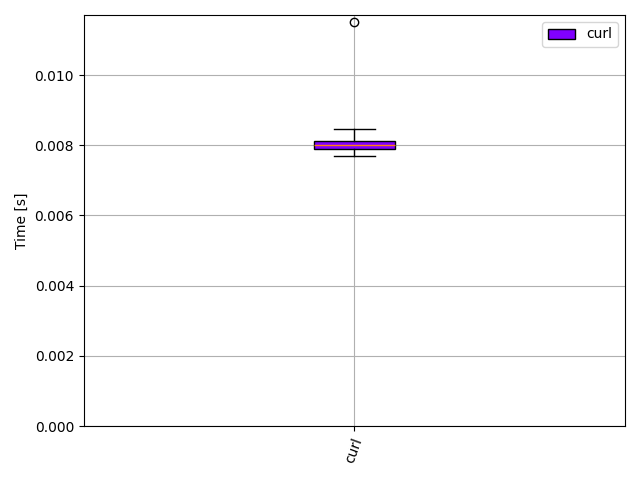

3.2. For curl it was a bit tricky as a shell loop wouldn't be fair. But I found a trick online.

My test results

Long story short, I ran the tests again after these adjustments, and at least for me all the Rust programs were the fastest, closely followed by Go and curl.

Most surprising was the slowdown in Zig. I'm not sure what exactly the issue is. I'm not a Zig dev and simply searched online for how to write a simple loop, so I probably did something wrong there... or maybe it really is this slow and will improve a lot by the full 0.11 release 🤔

I'll open a PR with my modifications shortly, so maybe you can check whether I did something wrong that slows it down this much.

| Command | Mean [s] | Min [s] | Max [s] | Relative |
|:---|---:|---:|---:|---:|
| zig-http-client | 4.065 ± 0.003 | 4.062 | 4.069 | 619.47 ± 942.33 |
| curl | 0.014 ± 0.003 | 0.010 | 0.021 | 2.12 ± 3.26 |
| rust-attohttpc | 0.007 ± 0.010 | 0.004 | 0.082 | 1.01 ± 2.19 |
| rust-hyper | 0.007 ± 0.011 | 0.004 | 0.092 | 1.05 ± 2.28 |
| rust-reqwest | 0.007 ± 0.008 | 0.005 | 0.081 | 1.09 ± 2.02 |
| rust-ureq | 0.007 ± 0.010 | 0.004 | 0.100 | 1.00 |
| go-http-client | 0.010 ± 0.005 | 0.007 | 0.094 | 1.58 ± 2.53 |
| python-http-client | 0.092 ± 0.002 | 0.090 | 0.099 | 13.98 ± 21.26 |