This repository contains the code to reproduce the results of the paper Direction of Arrival with One Microphone, a few LEGOs, and Non-Negative Matrix Factorization.

Conventional approaches to sound source localization require at least two microphones. It is known, however, that people with unilateral hearing loss can also localize sounds. Monaural localization is possible thanks to the scattering by the head, though it hinges on learning the spectra of the various sources. We take inspiration from this human ability to propose algorithms for accurate sound source localization using a single microphone embedded in an arbitrary scattering structure. The structure modifies the frequency response of the microphone in a direction-dependent way giving each direction a signature. While knowing those signatures is sufficient to localize sources of white noise, localizing speech is much more challenging: it is an ill-posed inverse problem which we regularize by prior knowledge in the form of learned non-negative dictionaries. We demonstrate a monaural speech localization algorithm based on non-negative matrix factorization that does not depend on sophisticated, designed scatterers. In fact, we show experimental results with ad hoc scatterers made of LEGO bricks. Even with these rudimentary structures we can accurately localize arbitrary speakers; that is, we do not need to learn the dictionary for the particular speaker to be localized. Finally, we discuss multi-source localization and the related limitations of our approach.

Dalia El Badawy and Ivan Dokmanić

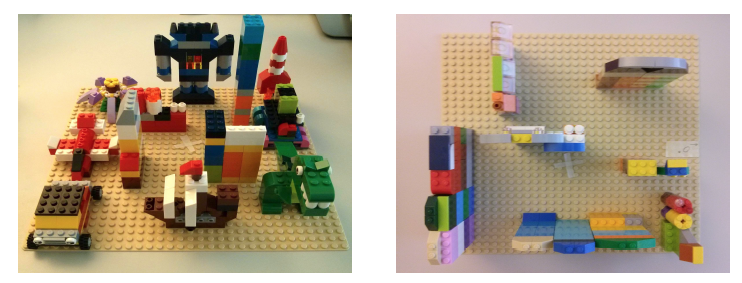

The devices made from LEGO bricks are placed on a base plate of size 25 cm by 25 cm. Left: LEGO1. Right: LEGO2.

The impulse responses measured in an anechoic chamber are stored in data as numpy arrays sampled at 16000 Hz. Each column corresponds to one direction.

- Python 2.7.

- Numpy, Protocol Buffers, Seaborn, and Matplotlib.

Each experiment is governed by a configuration file specifying which device to use, the spatial discretization, ... etc. The structure of the file and all the parameters are specified in proto/info.proto.

The configuration files used in the paper can be found in configs.

We use speech files from the TIMIT database stored as numpy arrays (the script preread.py does the conversion from wav file to .npy). The configuration files in configs assume the data is located in a subfolder of data, for e.g., data/test/female/.

There are three main scripts:

- exp_white.py: for results with white sources

- exp_proto.py: for results using a dictionary of spectral prototypes

- exp.py: for results using a universal speech model (USM)

Each script takes as input a configuration file. For example, in a UNIX terminal, run the following

python exp.py configs/lego1_fspeech_1.txt

A log file, figure, and text file with the results are generated in a subdirectory of results.