We present the network definition and weights for our second-place solution in the CVPR 2018 DeepGlobe Building Extraction Challenge.

Vladimir Iglovikov, Selim Seferbekov, Alexandr Buslaev, Alexey Shvets

If you find this work useful for your publications, please consider citing:

    @article{2018arXiv180600844I,
      author = {Iglovikov, V.~I. and Seferbekov, S. and Buslaev, A.~V. and Shvets, A.},
      title = "{TernausNetV2: Fully Convolutional Network for Instance Segmentation}",
      journal = {ArXiv e-prints},
      eprint = {1806.00844},
      year = 2018
    }

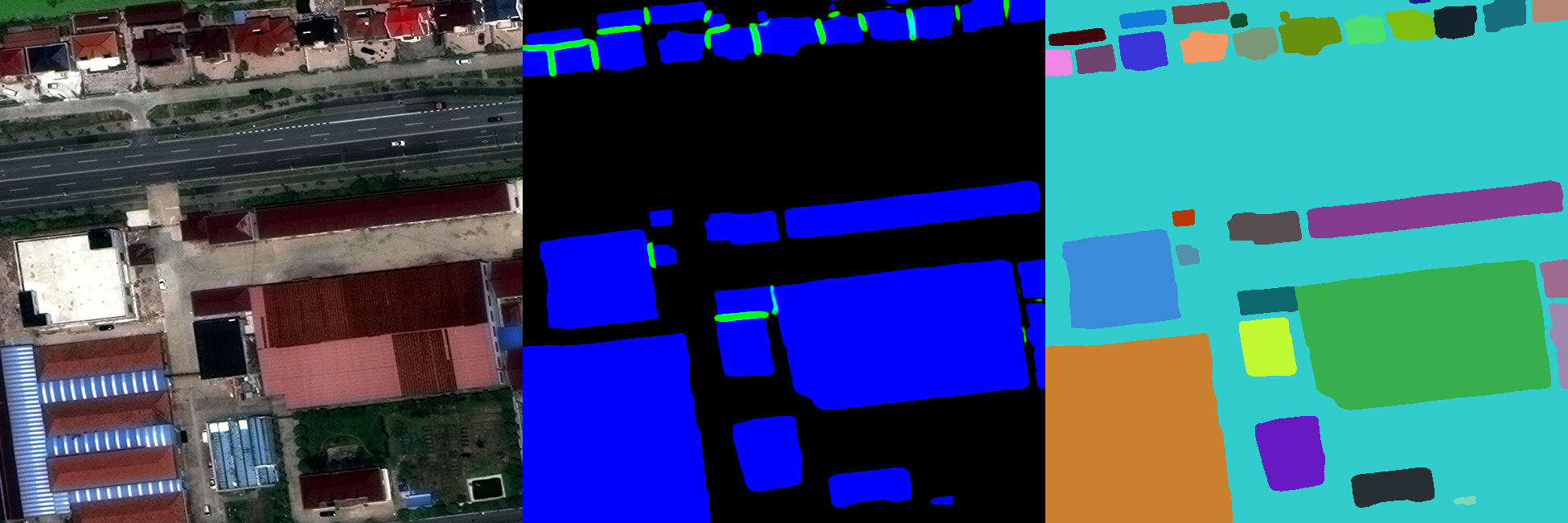

Automatic building detection in urban areas is an important task that creates new opportunities for large-scale urban planning and population monitoring. In the CVPR 2018 DeepGlobe Building Extraction Challenge, participants were asked to create algorithms that perform binary instance segmentation of building footprints from satellite imagery. Our team finished second, and in this work we share a description of our approach, the network weights, and code that is sufficient for inference.

The training data for the building detection subchallenge originate from the SpaceNet dataset. The dataset uses satellite imagery with 30 cm resolution collected from DigitalGlobe’s WorldView-3 satellite. Each image is 650x650 pixels and covers 195x195 m² of the Earth's surface. Moreover, each region consists of high-resolution RGB, panchromatic, and 8-channel low-resolution multispectral images. The satellite data come from four cities (Vegas, Paris, Shanghai, and Khartoum) with different coverage: (3831, 1148, 4582, 1012) images in the train sets and (1282, 381, 1528, 336) images in the test sets, respectively.
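The figures in the paragraph above can be cross-checked with a few lines of arithmetic. This is only a sanity-check sketch; the variable names are illustrative and do not come from the repository.

```python
# Figures quoted in the dataset description above.
RESOLUTION_M = 0.30          # ground sampling distance, metres per pixel
IMAGE_SIDE_PX = 650          # image side length in pixels
CITIES = ("Vegas", "Paris", "Shanghai", "Khartoum")
TRAIN_IMAGES = (3831, 1148, 4582, 1012)
TEST_IMAGES = (1282, 381, 1528, 336)

# Ground footprint of one image side, in metres.
side_m = IMAGE_SIDE_PX * RESOLUTION_M

# Dataset totals and the share of the training set held by each city.
train_total = sum(TRAIN_IMAGES)
test_total = sum(TEST_IMAGES)
train_share = {city: n / train_total for city, n in zip(CITIES, TRAIN_IMAGES)}

print(f"image side: {side_m:.0f} m")
print(f"train images: {train_total}, test images: {test_total}")
for city, share in train_share.items():
    print(f"{city}: {share:.1%} of the training set")
```

The per-city shares make the uneven coverage explicit: Shanghai and Vegas contribute most of the training images, while Paris and Khartoum contribute far fewer.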

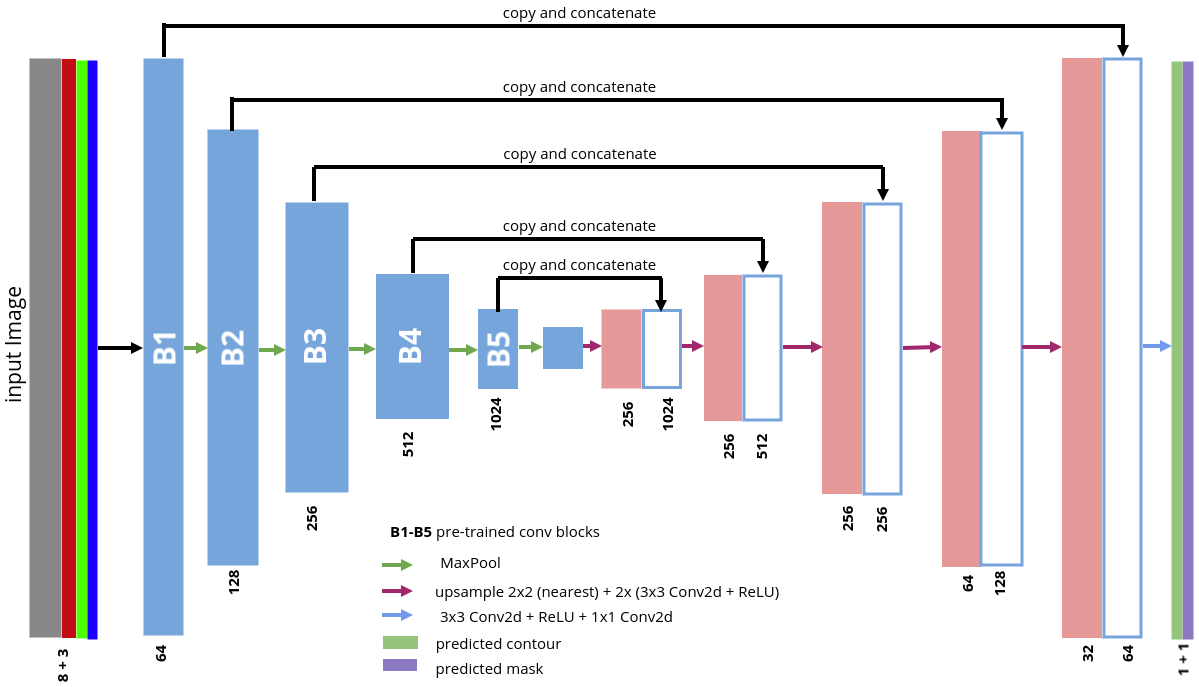

The original TernausNet was extended in a few ways:

- The encoder was replaced with WideResNet-38 with In-Place Activated BatchNorm.

- The input to the network was extended to work with 11 input channels: three for RGB and eight for multispectral data.
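The 11-channel input can be pictured as the RGB image stacked with the eight multispectral bands. The sketch below is a minimal illustration, not the repository's preprocessing: the function name `stack_rgb_and_multispectral` is hypothetical, and nearest-neighbour upsampling of the low-resolution bands is an assumption, since the text above does not say how the two resolutions are aligned.

```python
import numpy as np


def stack_rgb_and_multispectral(rgb, multispectral):
    """Build a channels-first 11-channel array from RGB and multispectral data.

    rgb:           array of shape (H, W, 3)
    multispectral: array of shape (h, w, 8), typically with h < H and w < W
    Returns a float32 array of shape (11, H, W).
    """
    height, width = rgb.shape[:2]
    ms_height, ms_width = multispectral.shape[:2]

    # Nearest-neighbour upsampling (assumption): map every high-resolution
    # pixel to the low-resolution pixel that covers it.
    rows = np.arange(height) * ms_height // height
    cols = np.arange(width) * ms_width // width
    upsampled = multispectral[rows[:, None], cols[None, :]]  # (H, W, 8)

    # Concatenate along the channel axis, then move channels first,
    # which is the layout PyTorch convolutions expect.
    stacked = np.concatenate([rgb, upsampled], axis=2).astype(np.float32)
    return stacked.transpose(2, 0, 1)
```

For example, a 650x650 RGB image and a 163x163 multispectral image (sizes chosen for illustration) produce an array of shape `(11, 650, 650)`, with channels 0 to 2 holding RGB and channels 3 to 10 holding the multispectral bands.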

To make our network perform instance segmentation, we used the idea that was proposed and successfully executed by Alexandr Buslaev, Selim Seferbekov and Victor Durnov in their winning solutions to the Urban 3D and Data Science Bowl 2018 challenges.

- The output of the network was modified to predict two binary masks: one that separates building from non-building pixels, and one that marks areas of an image where different objects touch or are very close to each other. These predicted masks are combined and used as input to the watershed transform.
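The text does not spell out how the two masks are combined, so the following is only a sketch under stated assumptions. Seeds are taken to be building pixels that lie outside the predicted touching areas, and the seeds are then grown back into the full building mask with a breadth-first flood. This seeded region growing is a simplified stand-in for the watershed transform (it behaves like flooding a flat surface), not the authors' post-processing. The name `label_instances` and the 0.5 threshold are hypothetical.

```python
from collections import deque

import numpy as np

NEIGHBOURS = ((1, 0), (-1, 0), (0, 1), (0, -1))  # 4-connectivity


def label_instances(building_prob, touching_prob, threshold=0.5):
    """Split a building mask into instances using a touching-area mask."""
    building = building_prob > threshold
    # Seeds (assumption): building pixels not flagged as touching areas.
    seeds = building & (touching_prob <= threshold)
    labels = np.zeros(building.shape, dtype=np.int32)
    height, width = building.shape

    def inside(y, x):
        return 0 <= y < height and 0 <= x < width

    # Step 1: give every connected seed region its own label.
    frontier = deque()
    next_label = 0
    for r in range(height):
        for c in range(width):
            if not seeds[r, c] or labels[r, c] != 0:
                continue
            next_label += 1
            labels[r, c] = next_label
            queue = deque([(r, c)])
            while queue:
                y, x = queue.popleft()
                frontier.append((y, x))
                for dy, dx in NEIGHBOURS:
                    ny, nx = y + dy, x + dx
                    if inside(ny, nx) and seeds[ny, nx] and labels[ny, nx] == 0:
                        labels[ny, nx] = next_label
                        queue.append((ny, nx))

    # Step 2: grow all seeds at once into the unlabeled building pixels,
    # so each touching-area pixel joins the nearest seed region.
    while frontier:
        y, x = frontier.popleft()
        for dy, dx in NEIGHBOURS:
            ny, nx = y + dy, x + dx
            if inside(ny, nx) and building[ny, nx] and labels[ny, nx] == 0:
                labels[ny, nx] = labels[y, x]
                frontier.append((ny, nx))
    return labels
```

Two adjacent buildings that the first mask merges into a single blob receive separate labels as long as the second mask marks the strip between them. In practice, a library watershed such as `skimage.segmentation.watershed` could be seeded with the same markers and restricted to the building mask.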

Results on the public and private leaderboards, measured with the metric used by the organizers of the CVPR 2018 DeepGlobe Building Extraction Challenge.

Results per city:

| City | Public Leaderboard | Private Leaderboard |
|---|---|---|
| Vegas | 0.891 | 0.892 |
| Paris | 0.781 | 0.756 |
| Shanghai | 0.680 | 0.687 |
| Khartoum | 0.603 | 0.608 |
| Average | 0.739 | 0.736 |
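The "Average" row is consistent with an unweighted mean of the four per-city scores, which the short check below reproduces. The dictionary layout and variable names are illustrative only.

```python
# Per-city scores from the table above: (public, private).
scores = {
    "Vegas": (0.891, 0.892),
    "Paris": (0.781, 0.756),
    "Shanghai": (0.680, 0.687),
    "Khartoum": (0.603, 0.608),
}

# Unweighted mean over cities, ignoring how many images each city has.
public_mean = sum(public for public, _ in scores.values()) / len(scores)
private_mean = sum(private for _, private in scores.values()) / len(scores)

print(f"public mean:  {public_mean:.3f}")
print(f"private mean: {private_mean:.3f}")
```

Because the mean is unweighted, the smaller Paris and Khartoum test sets count as much toward the final score as the larger Vegas and Shanghai sets.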

- Python 3.6

- PyTorch 0.4

- numpy 1.14.0

- opencv-python 3.3.0.10

- You can start using our network and weights by following the demonstration example:

- demo.ipynb