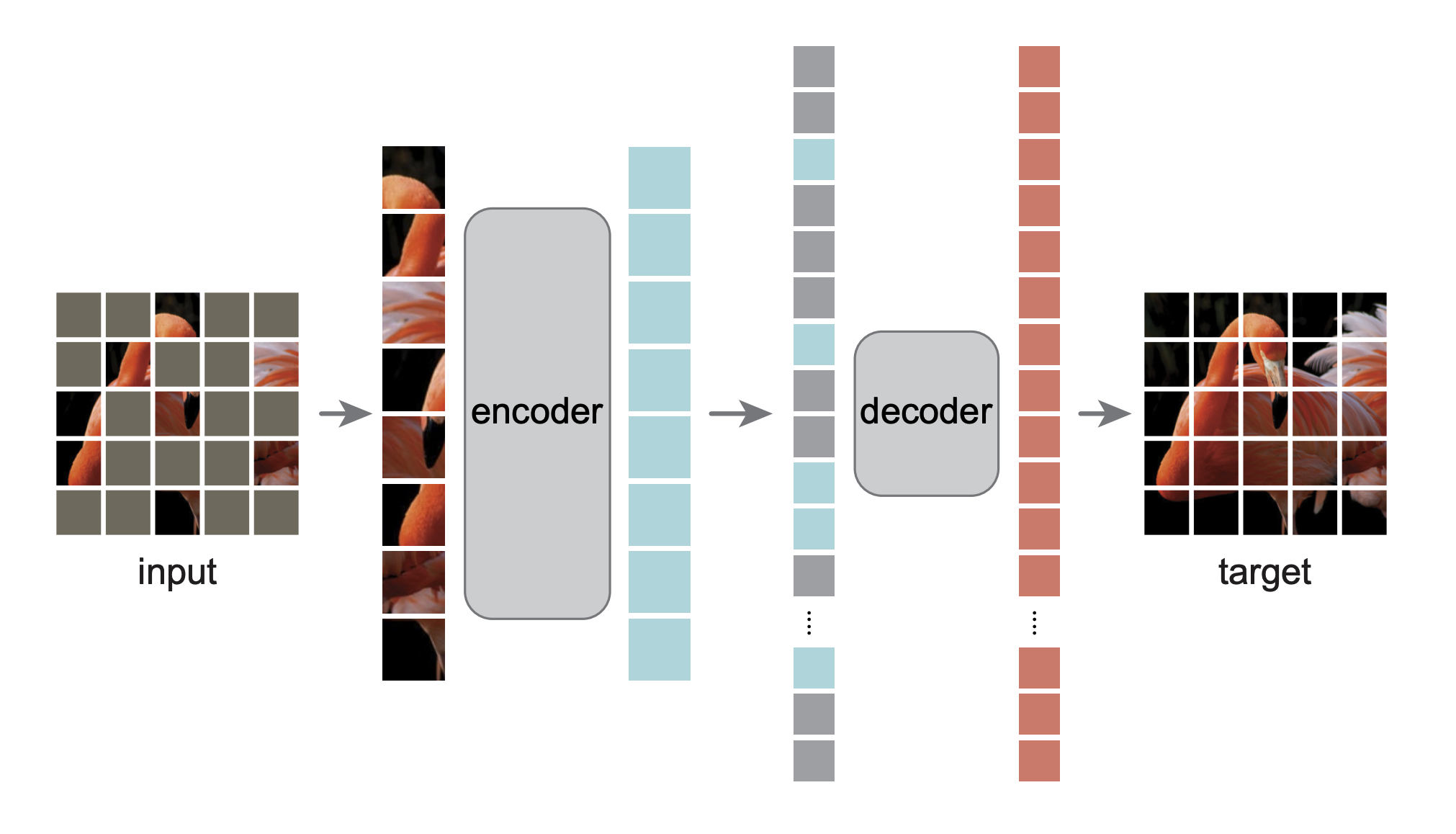

MAE can be trained in a self-supervised manner and then fine-tuned for downstream tasks such as image classification.

- Original repo

- FAQ

- Google Colab link

- [Docker Link - TBD]

1. What is unique about this fork?

This is just a reproduction of the original repo's demo in a notebook.

2. Why reproduce and freeze an existing result?

Reproducing and freezing a result addresses the problem of GitHub repos (including their notebooks) breaking over time as the packages they depend on are updated. This fork circumvents the problem by taking an environment snapshot of a working version.

The notebook downloads a working environment snapshot (made using conda-pack), including all required models. The Docker version is essentially the same environment packaged in a container.

3. What are the limitations?

1. The conda-pack snapshot was created on Ubuntu 20.04.4 LTS, so the target OS needs to be the same for the notebook to work. The Docker container does not have this limitation, even though conda-pack is used in its creation, because the container provides its own OS layer.

2. Reproducibility is achieved by using a conda-packed environment, which needs to be activated before any code in the repository is executed. This imposes a level of indirection on interactive coding in the notebook: edits to Python code need to be made in a Python file, and the notebook cell merely serves as a command-line interface to execute that file or function.
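The indirection described in item 2 might look roughly like the following in a notebook cell. This is a minimal sketch under stated assumptions: the directory `mae_env`, the script `demo.py`, its arguments, and the helpers `build_command` and `run_in_env` are hypothetical placeholders, not names used by this repository.

```python
import subprocess
from pathlib import Path


def build_command(env_dir, script, *args):
    """Build the argv that runs `script` with the unpacked environment's interpreter."""
    # Assumption: the unpacked conda-pack environment keeps its interpreter under bin/ (Linux layout).
    python_bin = Path(env_dir) / "bin" / "python"
    return [str(python_bin), str(script), *map(str, args)]


def run_in_env(env_dir, script, *args):
    """Run the script in a subprocess and return its standard output as text."""
    result = subprocess.run(
        build_command(env_dir, script, *args),
        capture_output=True,
        text=True,
        check=True,  # raise CalledProcessError if the script exits with a non-zero status
    )
    return result.stdout


# Hypothetical usage from a notebook cell:
# print(run_in_env("mae_env", "demo.py", "--image", "example.jpg"))
```

Because the notebook kernel and the packed environment are separate interpreters, nothing defined in the script is visible in the notebook; only what the script prints or writes to disk comes back.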

This is a PyTorch/GPU re-implementation of the paper Masked Autoencoders Are Scalable Vision Learners:

@Article{MaskedAutoencoders2021,

author = {Kaiming He and Xinlei Chen and Saining Xie and Yanghao Li and Piotr Doll{\'a}r and Ross Girshick},

journal = {arXiv:2111.06377},

title = {Masked Autoencoders Are Scalable Vision Learners},

year = {2021},

}

- The original implementation was in TensorFlow+TPU. This re-implementation is in PyTorch+GPU.

- This repo is a modification on the DeiT repo. Installation and preparation follow that repo.

- This repo is based on timm==0.3.2, for which a fix is needed to work with PyTorch 1.8.1+.

- Visualization demo

- Pre-trained checkpoints + fine-tuning code

- Pre-training code

Run our interactive visualization demo using the Colab notebook (no GPU needed).

The following table provides the pre-trained checkpoints used in the paper, converted from TF/TPU to PT/GPU:

| | ViT-Base | ViT-Large | ViT-Huge |
|---|---|---|---|
| pre-trained checkpoint | download | download | download |
| md5 | 8cad7c | b8b06e | 9bdbb0 |
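The md5 values in the table are short prefixes of the full digest. A minimal sketch of checking a downloaded checkpoint against one of them, using only the standard library; the file name in the usage comment and the helper names `md5_hex` and `matches_md5_prefix` are hypothetical:

```python
import hashlib


def md5_hex(path, chunk_size=1 << 20):
    """Return the hex MD5 digest of a file, reading it in chunks to bound memory use."""
    digest = hashlib.md5()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_md5_prefix(path, expected_prefix):
    """Check whether the file's MD5 digest starts with the given hex prefix."""
    return md5_hex(path).startswith(expected_prefix.lower())


# Hypothetical usage, with a placeholder file name for the ViT-Base checkpoint:
# assert matches_md5_prefix("vit_base_checkpoint.pth", "8cad7c")
```

A prefix match is a quick sanity check against truncated or corrupted downloads, not a security guarantee.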

The fine-tuning instructions are in FINETUNE.md.

By fine-tuning these pre-trained models, we rank #1 in these classification tasks (detailed in the paper):

| | ViT-B | ViT-L | ViT-H | ViT-H<sub>448</sub> | prev best |
|---|---|---|---|---|---|
| ImageNet-1K (no external data) | 83.6 | 85.9 | 86.9 | 87.8 | 87.1 |
| *following are evaluation of the same model weights (fine-tuned in original ImageNet-1K):* | | | | | |
| ImageNet-Corruption (error rate) | 51.7 | 41.8 | 33.8 | 36.8 | 42.5 |
| ImageNet-Adversarial | 35.9 | 57.1 | 68.2 | 76.7 | 35.8 |
| ImageNet-Rendition | 48.3 | 59.9 | 64.4 | 66.5 | 48.7 |
| ImageNet-Sketch | 34.5 | 45.3 | 49.6 | 50.9 | 36.0 |
| *following are transfer learning by fine-tuning the pre-trained MAE on the target dataset:* | | | | | |
| iNaturalists 2017 | 70.5 | 75.7 | 79.3 | 83.4 | 75.4 |
| iNaturalists 2018 | 75.4 | 80.1 | 83.0 | 86.8 | 81.2 |
| iNaturalists 2019 | 80.5 | 83.4 | 85.7 | 88.3 | 84.1 |
| Places205 | 63.9 | 65.8 | 65.9 | 66.8 | 66.0 |
| Places365 | 57.9 | 59.4 | 59.8 | 60.3 | 58.0 |

The pre-training instructions are in PRETRAIN.md.

This project is under the CC-BY-NC 4.0 license. See LICENSE for details.