Distributed training of a robotic arm to move to target locations using actor-critic DDPG.

In this project, a double-jointed arm is trained to move to target locations. A reward of +0.1 is provided for each step that the agent's hand is in the goal location. Thus, the goal of the agent is to maintain its position at the target location for as many time steps as possible.
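DDPG's critic learns to predict the discounted sum of these per-step rewards, and its target networks track the learned networks slowly. Below is a minimal NumPy sketch of those two pieces. It assumes that arrays of rewards, done flags, and the target critic's values Q'(s', μ'(s')) are already available. `critic_targets`, `soft_update`, and the `gamma` and `tau` defaults are illustrative assumptions, not this project's code or its tuned hyperparameters.

```python
import numpy as np


def critic_targets(rewards, dones, next_q, gamma=0.99):
    """Bootstrapped DDPG critic target: y = r + gamma * (1 - done) * Q'(s', mu'(s')).

    next_q holds the target critic's value for the target actor's action at s'.
    gamma=0.99 is an illustrative default, not a tuned value.
    """
    rewards = np.asarray(rewards, dtype=np.float64)
    dones = np.asarray(dones, dtype=np.float64)
    next_q = np.asarray(next_q, dtype=np.float64)
    # Terminal transitions do not bootstrap from the next state.
    return rewards + gamma * (1.0 - dones) * next_q


def soft_update(target_params, local_params, tau=1e-3):
    """Polyak averaging: theta_target <- tau * theta_local + (1 - tau) * theta_target.

    Parameters are given as lists of NumPy arrays; tau=1e-3 is illustrative.
    """
    return [tau * local + (1.0 - tau) * target
            for target, local in zip(target_params, local_params)]
```

A small `tau` keeps the bootstrapped targets slowly moving, which is the usual way DDPG stabilises critic training.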

The observation space consists of 33 variables corresponding to position, rotation, velocity, and angular velocities of the arm. Each action is a vector with four numbers, corresponding to torque applicable to two joints. Every entry in the action vector should be a number between -1 and 1.
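Here is a minimal sketch of how a deterministic actor's outputs might be turned into valid actions during training. It assumes NumPy and one row of four values per agent. Gaussian exploration noise is an assumption chosen for illustration; the original DDPG paper uses Ornstein-Uhlenbeck noise instead. `select_actions` and `noise_scale` are hypothetical names.

```python
import numpy as np

ACTION_SIZE = 4  # each action is a vector of four torque values


def select_actions(actor_output, noise_scale=0.1, rng=None):
    """Add exploration noise to deterministic actor outputs and clip to [-1, 1].

    actor_output: array of shape (num_agents, ACTION_SIZE).
    Gaussian noise is an illustrative assumption, not this project's choice.
    """
    rng = np.random.default_rng() if rng is None else rng
    actions = np.asarray(actor_output, dtype=np.float64)
    if actions.shape[-1] != ACTION_SIZE:
        raise ValueError(f"expected {ACTION_SIZE} action values, got {actions.shape[-1]}")
    actions = actions + noise_scale * rng.standard_normal(actions.shape)
    # Every entry must lie in [-1, 1] before the actions are sent to the environment.
    return np.clip(actions, -1.0, 1.0)
```

Clipping after adding noise guarantees the valid range even when the actor's output is already near a bound.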

The agents must achieve an average score of +30 over 100 consecutive episodes, averaged over all agents. In other words, the environment is considered solved when the average (over 100 episodes) of the per-episode average scores (over all agents) is at least +30.
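The solve criterion can be tracked with a fixed-length window. This sketch assumes that each agent's episode score is its undiscounted sum of rewards and that scores arrive as one value per agent. `SolveTracker` is a hypothetical helper, not part of this repository.

```python
from collections import deque

import numpy as np


class SolveTracker:
    """Average each episode's scores over agents, then check the mean of the
    last `window` episode averages against `target` (hypothetical helper)."""

    def __init__(self, window=100, target=30.0):
        self.window = window
        self.target = target
        self.recent = deque(maxlen=window)  # keeps only the latest `window` episodes

    def add_episode(self, per_agent_scores):
        # Average this episode's per-agent scores over all agents.
        episode_avg = float(np.mean(per_agent_scores))
        self.recent.append(episode_avg)
        return episode_avg

    def moving_average(self):
        return float(np.mean(self.recent)) if self.recent else 0.0

    def solved(self):
        # Require a full window of episodes before declaring success.
        return len(self.recent) == self.window and self.moving_average() >= self.target
```

For example, suppose `rewards` is an array of shape (steps, num_agents). Then `tracker.add_episode(rewards.sum(axis=0))` records one episode.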

Having multiple copies of the same agent sharing experience can accelerate learning.
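One common way to share experience is a single replay buffer. Every agent copy writes into it, and the DDPG learner samples from it. The sketch below assumes per-agent arrays for states, actions, rewards, next states, and done flags. `SharedReplayBuffer`, the capacity, and the seed are illustrative assumptions rather than this project's implementation.

```python
import random
from collections import deque, namedtuple

import numpy as np

Experience = namedtuple("Experience", ["state", "action", "reward", "next_state", "done"])


class SharedReplayBuffer:
    """One buffer that every agent copy writes into and the learner samples from."""

    def __init__(self, capacity=int(1e5), seed=0):
        self.memory = deque(maxlen=capacity)  # oldest experiences are dropped first
        self.rng = random.Random(seed)

    def add_from_all_agents(self, states, actions, rewards, next_states, dones):
        # Each row belongs to one agent copy; all rows go into the same pool.
        for s, a, r, s2, d in zip(states, actions, rewards, next_states, dones):
            self.memory.append(Experience(s, a, r, s2, d))

    def sample(self, batch_size):
        batch = self.rng.sample(list(self.memory), batch_size)
        # Stack each field into an array with a leading batch dimension.
        return tuple(np.array(field) for field in zip(*batch))

    def __len__(self):
        return len(self.memory)
```

Pooling experience this way lets one learner draw mixed batches from many agents at once. This is one reason that running several copies can speed up learning.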

Unity Machine Learning Agents (ML-Agents) is an open-source Unity plugin that enables simulations to serve as environments for training intelligent agents. This project uses the Reacher environment.

This project is part of the Udacity Deep Reinforcement Learning Nanodegree.

Download the Reacher environment that matches your operating system:

- Linux: click here

- Mac OSX: click here

- Windows (32-bit): click here

- Windows (64-bit): click here


Create (and activate) a new environment with Python 3.6.

- Linux or Mac:

conda create --name drlnd python=3.6

source activate drlnd

- Windows:

conda create --name drlnd python=3.6

activate drlnd


Clone the repository (if you haven't already!), navigate to the `python/` folder, and install the dependencies.

git clone https://github.com/udacity/deep-reinforcement-learning.git

cd deep-reinforcement-learning/python

pip install .

- Create an IPython kernel for the `drlnd` environment.

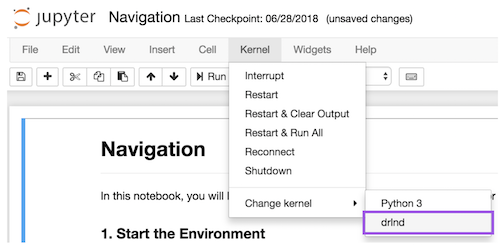

python -m ipykernel install --user --name drlnd --display-name "drlnd"

- Before running code in a notebook, change the kernel to match the `drlnd` environment by using the drop-down `Kernel` menu.

conda activate drlnd

jupyter notebook

Then open `Continuous_Control.ipynb` and run its cells.

We use SemVer for versioning. For the versions available, see the tags on this repository.

- Continuous Control with Deep Reinforcement Learning (DDPG)

- Proximal Policy Optimization Algorithms (PPO)

- Asynchronous Methods for Deep Reinforcement Learning (A3C)

- Distributed Distributional Deterministic Policy Gradients (D4PG)

- Benchmarking Deep Reinforcement Learning for Continuous Control

- OpenAI Baselines

- Tom Ge - Fullstack engineer - GitHub profile

This project is licensed under the MIT License